Originally published via Armageddon Prose:

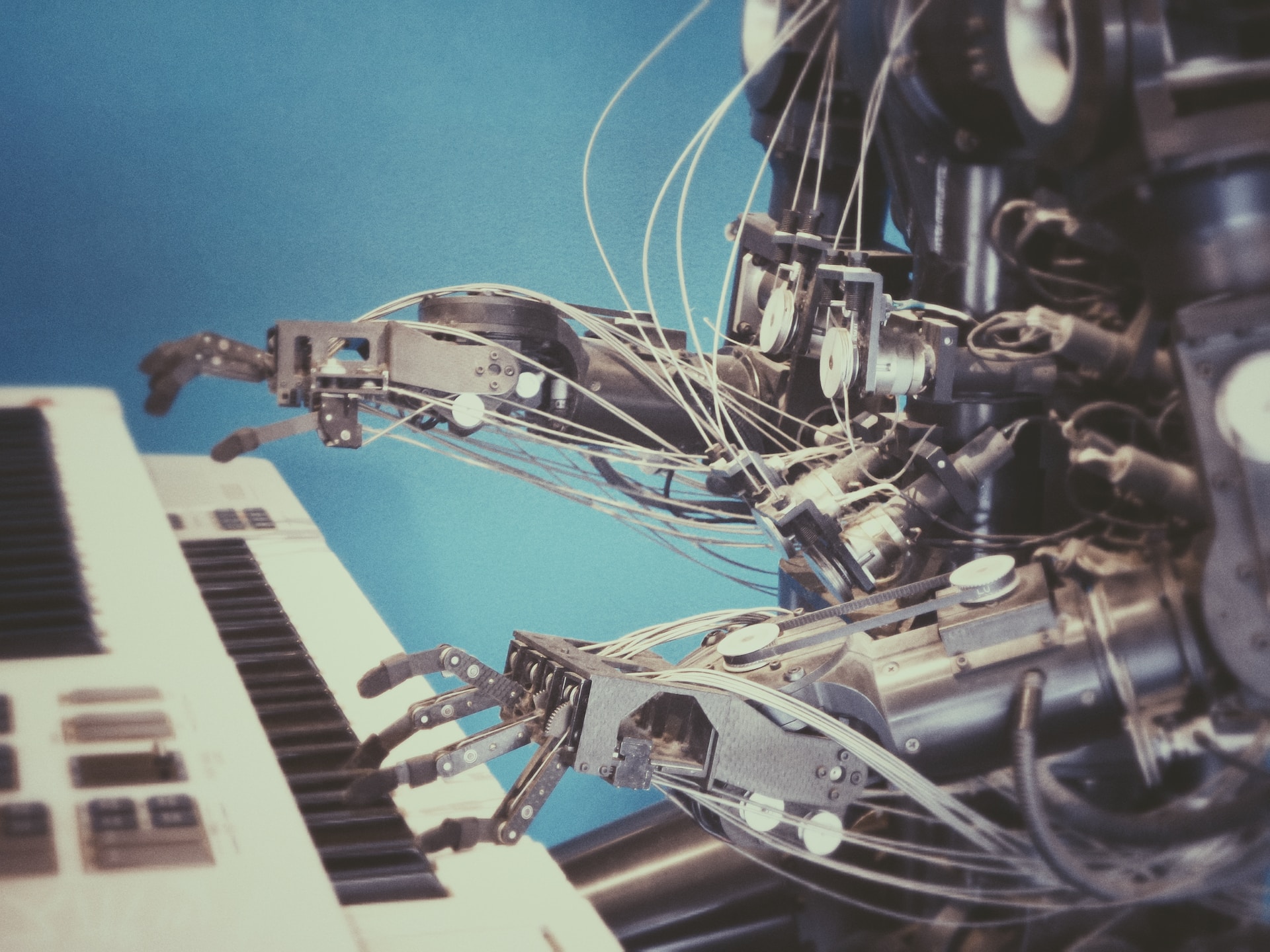

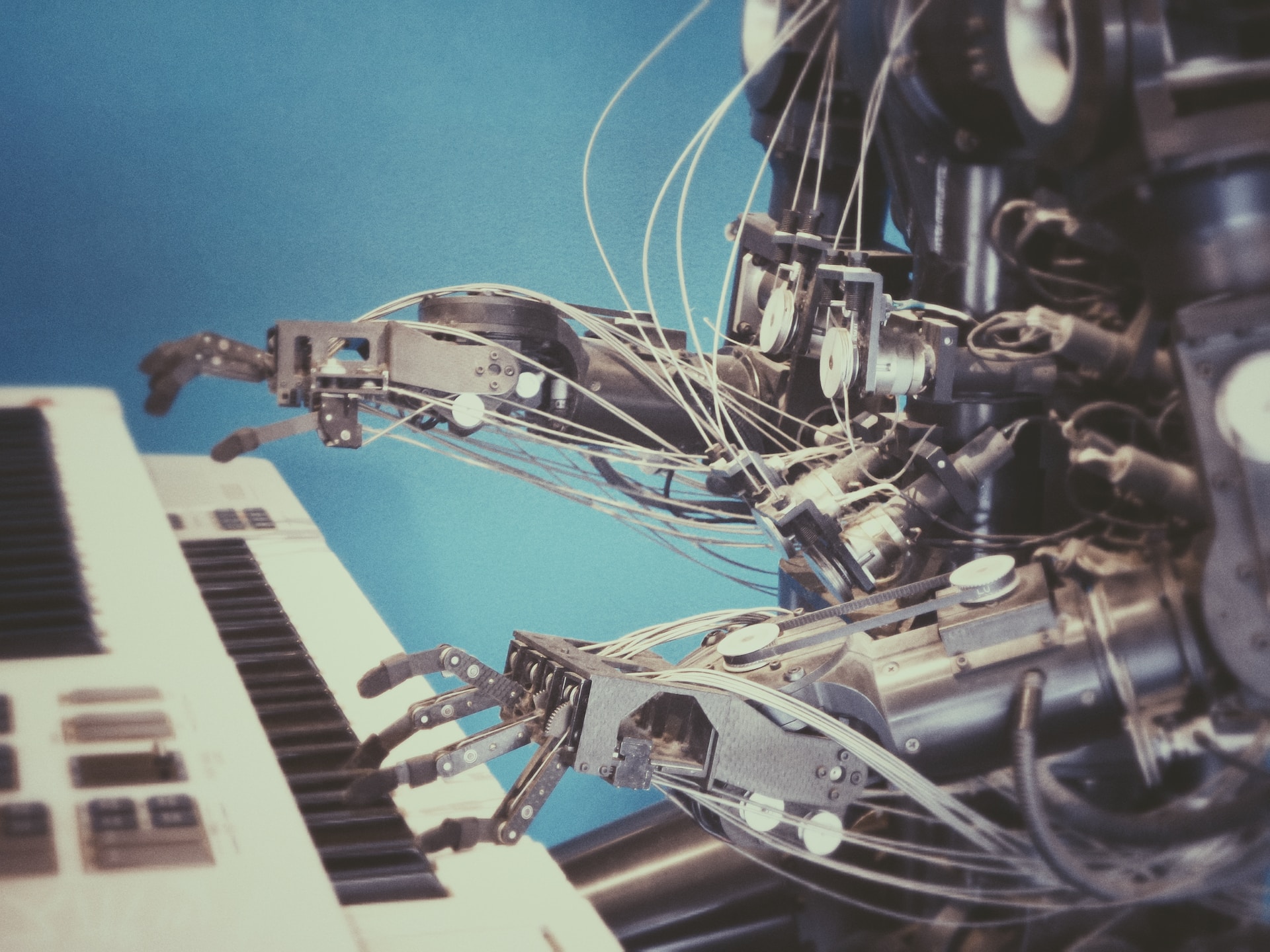

Surveying the latest transgressions against decency, morality, and humanity itself by artificial intelligence and its biological architects.

The major nuclear powers of the world now entrust decision-making to the digital bowels of artificial intelligence (immune to radioactive fallout, by the way), which (at best) harbors a moral indifference to humanity’s continued existence and, more likely, views it as a nuisance.

Via Nikkei Asia:

“The Biden administration plans to encourage China to work with the U.S. on international norms for artificial intelligence in weapons systems, a potential new area of cooperation amid tensions between the two powers.

U.S. President Joe Biden’s administration has warned against relying on AI to make decisions involving the use of nuclear weapons and argues that humans should be involved in all critical decisions. “We’ve seen through history numerous examples of where decision-making could be challenged by not having a human understanding the context of the technology, understanding the background of its use, and really not being able to make the most informed decision,” said Stewart, who suggested that maintaining human involvement in nuclear operations would be in China’s best interest.

The issue of AI use with nuclear weapons would also require discussion with Russia, which continues with its war in Ukraine. Russia has suspended its participation in the last remaining bilateral nuclear arms control arrangement known as New START amid heightened tensions with the West.”

Let’s be honest: the U.S. government is the epicenter of the AI takeover. Anything it accuses China of doing in this domain, including endowing AI with the decision-making capacity to drop nukes, it has likely already done, or is in the process of doing, itself.

The entire point of the doctrine of mutually assured destruction (MAD), the sole reason that Earth is still (somewhat) inhabitable in 2023, is that the humans who lead nuclear nations will not fire nukes at other nuclear powers, because they understand that doing so would trigger a global catastrophe that they would be unlikely to survive, either as states or as individuals. An AI immune to fallout operates under no such restraint.

And it’s not just nukes that AI can be used to develop and deploy.

Via Foreign Affairs:

“When drug researchers used AI to develop 40,000 potential biochemical weapons in less than six hours last year, they demonstrated how relatively simple AI systems can be easily adjusted to devastating effect. Sophisticated AI-powered cyberattacks could likewise go haywire, indiscriminately derailing critical systems that societies depend on, not unlike the infamous NotPetya attack, which Russia launched against Ukraine in 2017 but eventually infected computers across the globe. Despite these warning signs, AI technology continues to advance at breakneck speed, causing the safety risks to multiply faster than solutions can be created.”

The regulatory attempts by governments to constrain AI — such as they are — is a giant game of Whac-a-Mole. There is no putting this genie back in the bottle, and it’s certainly not going to happen under the leadership of the Brandon entity, no matter how full of amphetamine they pump him, or future President Karamel-uh, whose only expertise is in whose dick she has to suck to get her next promotion.


This is the pharmaceutical-über-alles ideology at work.

To these people, there exists no problem that can’t be solved at the tip of a needle.

Via Japan Today:

“Scientists in Brazil, the world’s second-biggest consumer of cocaine, have announced the development of an innovative new treatment for addiction to the drug and its powerful derivative crack: a vaccine.

Dubbed “Calixcoca,” the test vaccine, which has shown promising results in trials on animals, triggers an immune response that blocks cocaine and crack from reaching the brain, which researchers hope will help users break the cycle of addiction.

Put simply, addicts would no longer get high from the drug.

If the treatment gets regulatory approval, it would be the first time cocaine addiction is treated using a vaccine, said psychiatrist Frederico Garcia, coordinator of the team that developed the treatment at the Federal University of Minas Gerais.

The project won top prize last week – 500,000 euros ($530,000) – at the Euro Health Innovation awards for Latin American medicine, sponsored by pharmaceutical firm Eurofarma.”

Moving forward, literally every social, psychological, cultural, and obviously physical ailment will come with an FDA-approved “vaccine” to treat it.

Look, bigot, you’re just going to have to come to terms with the new reality that, on occasion, cars driven by AI are simply going to mow down grandmothers and children in their way.

This is the price of Progress™.

Anyway, it’s their human fault for being small and feeble. If God had wanted them to survive the Brave New World, he would’ve fashioned their bones out of titanium instead of calcium; or, better yet, rendered their consciousness ethereal and non-corporeal in his own image, like he did with our new AI overlords.

Via The Intercept:

“AV companies hope these driverless vehicles will replace not just Uber, but also human driving as we know it. The underlying technology, however, is still half-baked and error-prone, giving rise to widespread criticisms that companies like Cruise are essentially running beta tests on public streets.

Despite the popular skepticism, Cruise insists its robots are profoundly safer than what they’re aiming to replace: cars driven by people. In an interview last month, Cruise CEO Kyle Vogt downplayed safety concerns: “Anything that we do differently than humans is being sensationalized.”

The concerns over Cruise cars came to a head this month. On October 17, the National Highway Traffic Safety Administration announced it was investigating Cruise’s nearly 600-vehicle fleet because of risks posed to other cars and pedestrians. A week later, in San Francisco, where driverless Cruise cars have shuttled passengers since 2021, the California Department of Motor Vehicles announced it was suspending the company’s driverless operations. Following a string of highly public malfunctions and accidents, the immediate cause of the order, the DMV said, was that Cruise withheld footage from a recent incident in which one of its vehicles hit a pedestrian, dragging her 20 feet down the road.

Even before its public relations crisis of recent weeks, though, previously unreported internal materials such as chat logs show Cruise has known internally about two pressing safety issues: Driverless Cruise cars struggled to detect large holes in the road and have so much trouble recognizing children in certain scenarios that they risked hitting them. Yet, until it came under fire this month, Cruise kept its fleet of driverless taxis active, maintaining its regular reassurances of superhuman safety.

“[Cruise’s] internal materials attribute the robot cars’ inability to reliably recognize children under certain conditions to inadequate software and testing. “We have low exposure to small VRUs” — Vulnerable Road Users, a reference to children — “so very few events to estimate risk from,” the materials say. Another section concedes Cruise vehicles’ “lack of a high-precision Small VRU classifier,” or machine learning software that would automatically detect child-shaped objects around the car and maneuver accordingly. The materials say Cruise, in an attempt to compensate for machine learning shortcomings, was relying on human workers behind the scenes to manually identify children encountered by AVs where its software couldn’t do so automatically.”

Ben Bartee, author of Broken English Teacher: Notes From Exile, is an independent Bangkok-based American journalist with opposable thumbs.

Follow his stuff on Substack if you are inclined to support independent journalism free of corporate slant. Also, keep tabs via Twitter.

For hip Armageddon Prose t-shirts, hats, etc., peruse the merch store.

Insta-tip jar and Bitcoin public address: bc1qvq4hgnx3eu09e0m2kk5uanxnm8ljfmpefwhawv